Adobe just plugged Google’s Gemini 2.5 Flash Image model, nicknamed “Nano-Banana,” into Firefly and Adobe Express, according to an Adobe blog post. It’s live now in Firefly’s Text-to-Image and Firefly Boards (beta), and arrives in Express on September 1.

Here’s how this amps up the workflow:

- One workflow, more creativity. Start in Firefly to dial in a stylized sequence of visuals, then move them into Express—animate, resize, add captions, publish—all without redoing work.

- Faster ideation, sharper delivery. Marketers and SMB creatives can remix campaigns on the fly: swap backgrounds, inject new elements, and generate variations that stay on-brand in minutes.

- Prototypes to production. Visual designers can sketch concepts or character ideas in Firefly, then seamlessly refine them in Photoshop or Illustrator for professional polish.

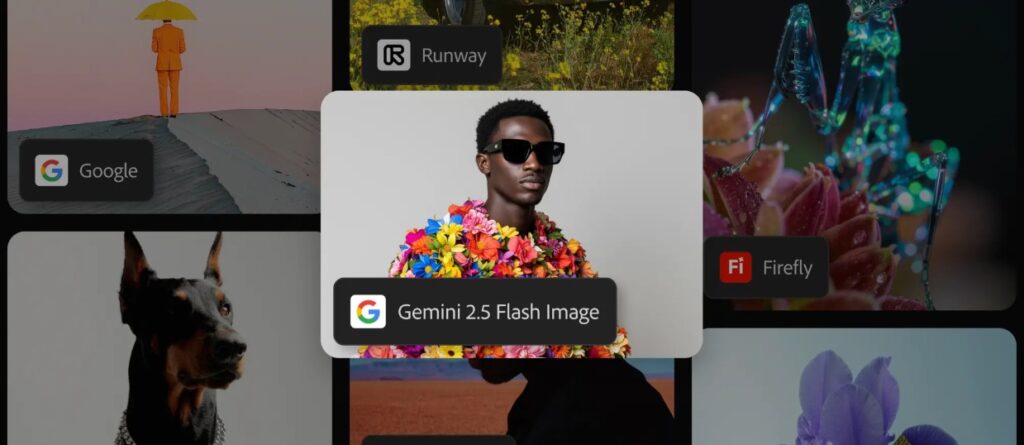

Adobe already lets users tap models from OpenAI, Runway, Google, and more—all within one app, no hopping around between tools. Users pick the right model for the job and still use the Creative Cloud pipeline.

Plus, Adobe keeps the content safe: nothing users generate or upload is used to train AI models. AI outputs also come with Content Credentials for traceability.

Free Firefly users get 20 Gemini-powered generations. Paid Firefly and Creative Cloud Pro subscribers have unlimited access through September 1. Express users follow on September 1.

The integration streamlines creative work, from rapid visual ideation to polished, campaign-ready assets, while giving users transparency and control. With the tools aligned in one workflow, there is less friction and more creative bandwidth.