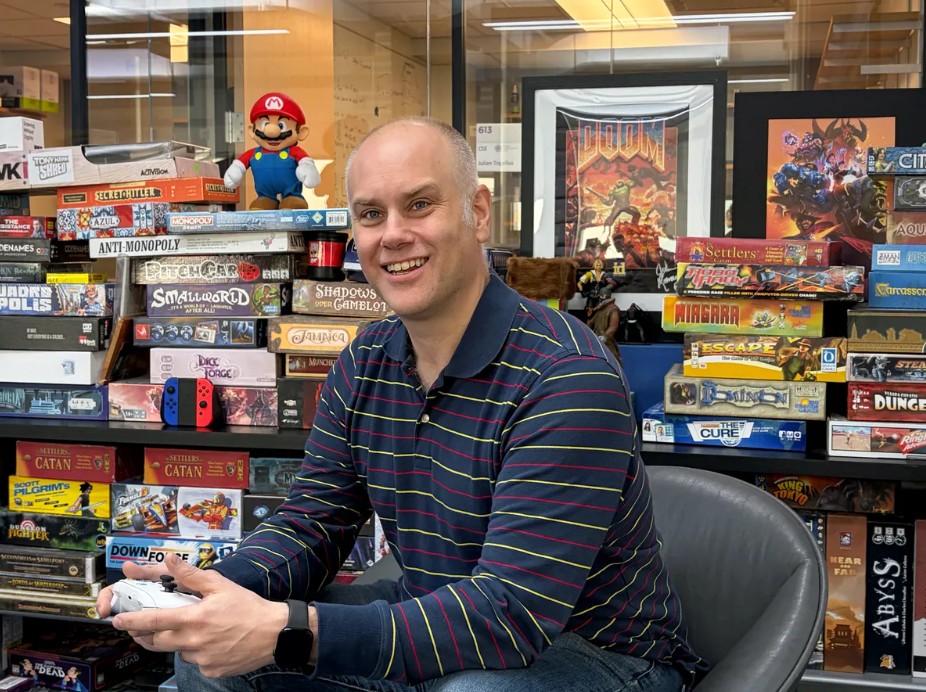

Large language models are proving useful in video game development, but their attempts to actually play games expose fundamental limitations. An IEEE Spectrum article explores this gap through the work of Julian Togelius, who studies how AI systems perform in game environments.

LLMs excel at generating content. They can write code, design levels, and produce dialogue, making them valuable tools for game development workflows. This aligns with broader trends in game AI, where generative systems are increasingly used to automate storytelling and asset creation.

However, when asked to play games, these same models struggle. Unlike reinforcement learning systems that learn through trial and error, LLMs rely on text-based reasoning. They often fail at tasks requiring spatial awareness, long-term planning, or consistent rule-following. Even simple games can expose these weaknesses, as models make logical mistakes or lose track of objectives.

This limitation highlights a deeper issue: LLMs do not truly “understand” game environments. They generate responses based on patterns in data rather than grounded interaction with the world. As a result, they can describe strategies or explain rules but cannot reliably execute them in dynamic settings.

The contrast between writing and playing games underscores two different kinds of intelligence. Content generation depends on language and pattern recognition, areas where LLMs perform well. Gameplay instead requires embodied reasoning, including timing, perception, and decision-making under uncertainty.

Researchers see games as valuable testing grounds for AI because they combine structured rules with complex environments. If an AI system cannot handle these controlled scenarios, that failure raises questions about its ability to function in real-world situations that demand similar reasoning skills.
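To make the idea of a game as a test bed concrete, here is a minimal sketch of a harness that counts how often a player breaks the rules of tic-tac-toe. It is not taken from the article or from any published benchmark. The function names and the two scripted players (`forgetful_agent`, `careful_agent`) are hypothetical stand-ins; in a real experiment the `agent` callable would wrap a model's text output.

```python
# Illustrative harness: count rule violations by a player in tic-tac-toe.
# All names here are hypothetical; nothing below comes from the article.

WIN_LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),
             (0, 3, 6), (1, 4, 7), (2, 5, 8),
             (0, 4, 8), (2, 4, 6)]

def legal_moves(board):
    """Return the indices of empty cells."""
    return [i for i, cell in enumerate(board) if cell == " "]

def has_won(board, mark):
    """True if `mark` occupies any complete line."""
    return any(all(board[i] == mark for i in line) for line in WIN_LINES)

def count_illegal_moves(agent):
    """Let `agent` play both sides; return how many illegal moves it proposed."""
    board = [" "] * 9
    illegal = 0
    for turn in range(9):
        mark = "X" if turn % 2 == 0 else "O"
        options = legal_moves(board)
        move = agent(board, mark)
        if move not in options:
            illegal += 1
            move = options[0]  # substitute a legal move so the game can continue
        board[move] = mark
        if has_won(board, mark):
            break
    return illegal

def forgetful_agent(board, mark):
    """Stand-in for a player that ignores the board state: always picks the center."""
    return 4

def careful_agent(board, mark):
    """Stand-in for a player that always picks the first empty cell."""
    return legal_moves(board)[0]

if __name__ == "__main__":
    print("forgetful:", count_illegal_moves(forgetful_agent))
    print("careful:", count_illegal_moves(careful_agent))
```

Because the rules are fully specified, every violation can be detected automatically, which is part of what makes even a simple game a useful controlled scenario.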

The article suggests that progress will depend on combining language models with other approaches, such as reinforcement learning or world models. LLMs may contribute to higher-level reasoning, but they are unlikely to succeed alone in interactive environments.
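For readers unfamiliar with the trial-and-error learning that reinforcement learning refers to, the following is a minimal sketch using tabular Q-learning on a toy five-cell corridor. The environment, the function names, and the hyperparameters are all assumptions chosen for illustration. The article does not describe this setup, and practical game-playing systems are far more elaborate.

```python
import random

# Illustrative only: tabular Q-learning on a hypothetical 1-D corridor.
# The agent starts in cell 0 and is rewarded for reaching the last cell.

def train_corridor_agent(length=5, episodes=500, alpha=0.5,
                         gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    # q[state][action], where action 0 = left and action 1 = right
    q = [[0.0, 0.0] for _ in range(length)]
    goal = length - 1
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap on steps per episode
            # Explore with probability epsilon, otherwise act greedily
            if rng.random() < epsilon:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            if action == 0:
                next_state = max(0, state - 1)
            else:
                next_state = min(goal, state + 1)
            reward = 1.0 if next_state == goal else 0.0
            # No future value is added once the goal (terminal state) is reached
            future = 0.0 if next_state == goal else gamma * max(q[next_state])
            q[state][action] += alpha * (reward + future - q[state][action])
            state = next_state
            if state == goal:
                break
    return q

def greedy_policy(q):
    """Best action per non-terminal cell according to the learned values."""
    return ["left" if left > right else "right" for left, right in q[:-1]]

if __name__ == "__main__":
    q_table = train_corridor_agent()
    print(greedy_policy(q_table))
```

The agent is never told the rules. Its value estimates come entirely from acting in the environment and observing rewards, which is the kind of grounded interaction a text-only model lacks.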

Ultimately, the research reframes expectations. LLMs are powerful tools for creativity and design, but their limitations in gameplay reveal that true general intelligence remains an unsolved problem.