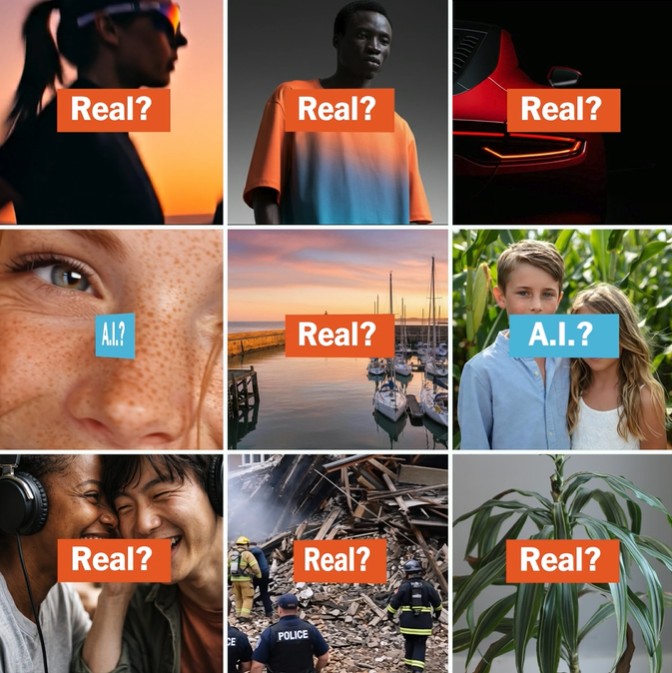

As AI-generated images, video, and audio grow increasingly realistic, a new class of online tools claims to distinguish authentic media from synthetic fabrications. A wide-ranging set of tests conducted by The New York Times, covering more than a dozen detection platforms and over 1,000 scans, suggests those claims deserve caution. While many detectors successfully flagged basic AI-generated content, none proved reliable enough to serve as a definitive arbiter of truth.

Most tools performed well when analyzing straightforward fakes, such as lifelike portraits created from simple prompts. These images often contained subtle markers, including overly perfect lighting or anatomical distortions, that detectors were trained to recognize. Yet performance varied: even ChatGPT failed to identify an AI-generated image it had just produced. Developers acknowledged that no system can guarantee perfect accuracy, describing detection as an ongoing contest in which generators continually improve.

More complex or blended media exposed weaknesses. Detectors struggled with fictional landscapes that lacked obvious human subjects, suggesting that many systems are optimized for faces because of their anti-fraud and security applications. Video analysis remains limited: only a handful of platforms can evaluate moving images or live feeds, and their results were inconsistent. Audio detection proved comparatively stronger. Tools from Sensity and Resemble.ai, for instance, identified synthetic voices with high confidence, even when files were altered.

Encouragingly, detectors were generally better at recognizing authentic images and videos, reducing the risk of falsely labeling real content as fake. Still, AI-edited images posed a serious challenge. Subtle alterations, such as digitally added smoke in a real photograph, often went unnoticed. The findings underscore that detection tools can support verification efforts but must be paired with traditional reporting and contextual research to avoid misplaced certainty in an era defined by digital ambiguity.