Moore’s Law began as a simple observation by Gordon Moore that the number of transistors on integrated circuits would double roughly every two years. This trend shaped the semiconductor industry for more than half a century, driving consistent improvements in computing speed, cost, and energy efficiency and enabling innovations from personal computers to data centers.
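The arithmetic behind that doubling can be sketched in a few lines of Python. This is only an idealized illustration: the function name `projected_transistors`, the starting count of 1,000, and the exact two-year period are assumptions made for the example, not figures from the article or from real chips.

```python
def projected_transistors(initial_count: float,
                          years: float,
                          doubling_period_years: float = 2.0) -> float:
    """Idealized transistor count after `years`, assuming one doubling
    every `doubling_period_years` (a hypothetical, perfectly smooth trend)."""
    return initial_count * 2 ** (years / doubling_period_years)


if __name__ == "__main__":
    start = 1_000  # hypothetical starting count, chosen only for illustration
    for years in (0, 2, 10, 20):
        count = projected_transistors(start, years)
        print(f"after {years:>2} years: {count:,.0f} transistors")
```

Under these assumptions, twenty years means ten doublings, or roughly a thousandfold increase. That compounding is why even a modest slowdown in the doubling period matters so much over time.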

Today, physical and economic limits are forcing a rethink of that rule, according to this article from The Conversation. The atomic scale of matter, energy dissipation, and rising manufacturing costs mean that shrinking transistors further is no longer the automatic path to performance gains it once was. The predictable rhythm of Moore’s Law has faded not because innovation has stalled, but because the assumptions that once made it nearly automatic no longer hold.

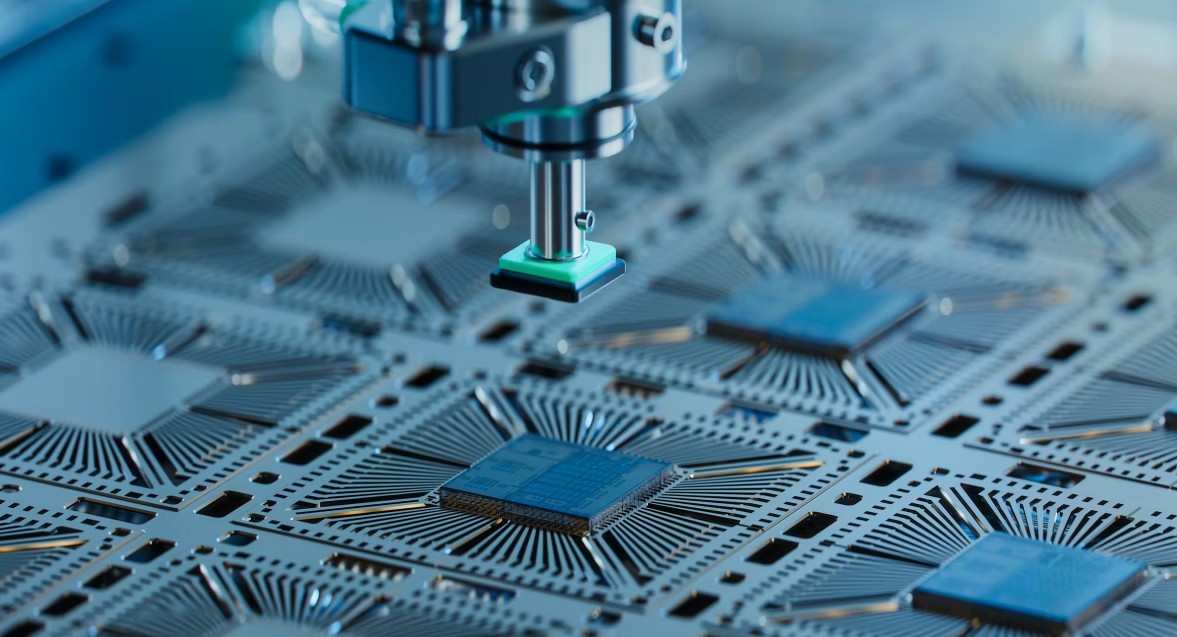

The industry’s response isn’t a single replacement for Moore’s Law. Instead, chip designers are combining multiple approaches. One strategy is the use of novel materials and transistor designs that waste less energy and manage power better. Another is three-dimensional chip stacking and new architectural layouts that bring components closer together or layer them vertically, easing the physical constraints on further performance improvements. Specialized accelerators, such as GPUs and domain-specific processors, are taking on workloads that general-purpose CPUs can’t handle efficiently. These shifts yield gains that are more uneven and task-specific than the broad doubling of performance the old rule suggested.

Software and system-level design are also part of the equation. With raw hardware scaling slowing, more performance gains come from software optimizations, parallel computing models, and tighter integration between hardware and software stacks. And emerging paradigms such as quantum computing and neuromorphic architectures hint at fundamentally different ways to compute that don’t rely on packing more transistors onto silicon.

In short, the era when chip performance reliably doubled every two years is ending. The future lies in a mix of improved materials, new architectures, and entirely new computing models rather than a single scaling law.