A recent article from Popular Science explores an unconventional idea gaining traction in human–computer interaction research: programming without a screen. As artificial intelligence, spatial computing, voice systems, and wearable interfaces evolve, researchers and developers are beginning to question whether software creation must remain tied to keyboards and monitors. The article examines experimental systems that could fundamentally reshape the act of coding itself.

For decades, programming has depended on highly visual workflows built around text editors, terminals, and graphical debugging environments. The article argues that this model may not be permanent. Advances in AI-assisted coding and natural-language interfaces are opening pathways toward more conversational and embodied forms of software development. Developers may eventually interact with code through speech, gestures, augmented reality environments, or even physical movement rather than through typing alone.

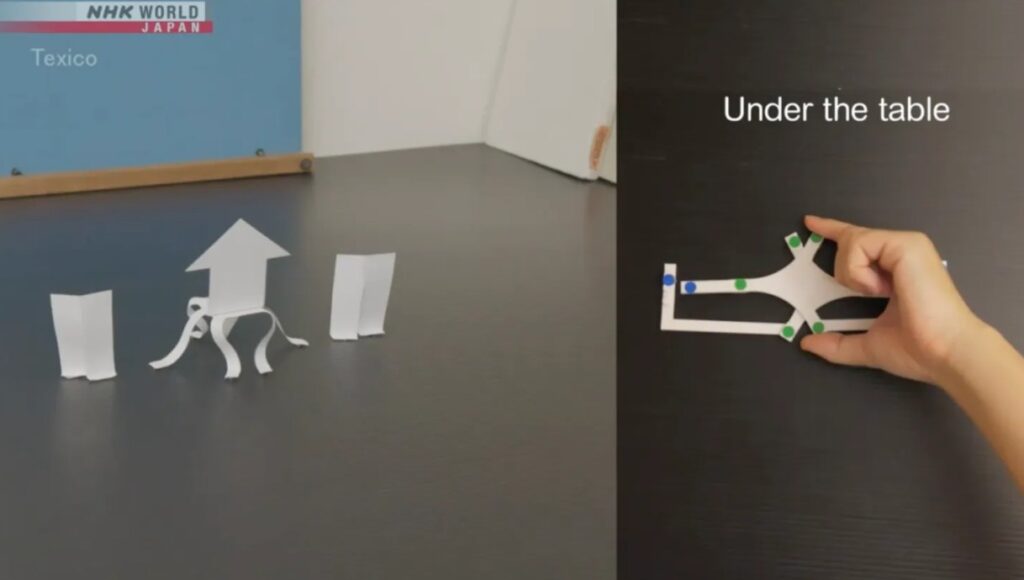

Researchers highlighted in the article are exploring auditory programming systems that communicate software structures through sound, allowing programmers to navigate logic using spatial audio cues. Other experiments involve wearable devices, virtual workspaces, and room-scale interfaces that transform programming into a more immersive activity. Some concepts resemble conducting an orchestra or manipulating physical objects rather than editing lines of text.
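To make the idea of navigating logic by sound more concrete, here is a minimal sketch of how code structure could be turned into spatial audio cues. It is only an illustration: the article does not describe a specific mapping, so the `AudioCue` type, the `cues_for_source` function, and the rule that deeper nesting means higher pitch and a position further to the right are all assumptions. The sketch produces cue parameters only; playing them would need a separate audio library.

```python
import ast
from dataclasses import dataclass

# Hypothetical mapping, not taken from the article: deeper nesting is
# rendered as a higher pitch and a position further to the right.
BASE_PITCH_HZ = 220.0
MAX_DEPTH = 4

# Node types that get their own cue in this sketch.
STRUCTURAL = (ast.FunctionDef, ast.For, ast.While, ast.If, ast.Return)


@dataclass
class AudioCue:
    label: str       # what a speech engine might announce
    pitch_hz: float  # rises by one octave over MAX_DEPTH levels
    pan: float       # -1.0 is far left, 1.0 is far right


def cues_for_source(source: str) -> list[AudioCue]:
    """Walk the syntax tree in source order and emit one cue per structural node."""
    cues: list[AudioCue] = []

    def visit(node: ast.AST, depth: int) -> None:
        for child in ast.iter_child_nodes(node):
            if isinstance(child, STRUCTURAL):
                clamped = min(depth, MAX_DEPTH)
                cues.append(AudioCue(
                    label=type(child).__name__,
                    pitch_hz=BASE_PITCH_HZ * 2 ** (clamped / MAX_DEPTH),
                    pan=-1.0 + 2.0 * clamped / MAX_DEPTH,
                ))
                visit(child, depth + 1)
            else:
                visit(child, depth)

    visit(ast.parse(source), 0)
    return cues


SAMPLE = """
def total(xs):
    s = 0
    for x in xs:
        if x > 0:
            s += x
    return s
"""

sample_cues = cues_for_source(SAMPLE)
for cue in sample_cues:
    print(f"{cue.label:12s} pitch={cue.pitch_hz:6.1f} Hz  pan={cue.pan:+.2f}")
```

In this sketch a listener would hear the function announced at the far left, the loop and the conditional stepping toward the center at rising pitch, and the `return` dropping back to the loop's level, which is one way a nested structure could be followed without looking at it.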

The article also notes that artificial intelligence is accelerating this shift away from screen-centric workflows. Large language models can already generate, explain, and refactor code from plain-language instructions. As AI systems become more capable, programming may move from writing syntax by hand to stating higher-level intentions and validating outputs. In such workflows, screens could become less central because developers would spend more time guiding systems conceptually than handling low-level implementation details.
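One way to picture "directing intentions and validating outputs" is a workflow in which the developer supplies examples of the desired behavior and checks a generated implementation against them. The sketch below is an assumption-laden illustration rather than anything described in the article: `validate`, `generated_slugify`, and the example checks are hypothetical names, and the candidate function is written by hand as a stand-in for model output, since no real AI service is called.

```python
from typing import Callable

# Each check pairs the arguments to pass with the result the developer expects.
Check = tuple[tuple, object]


def validate(candidate: Callable, checks: list[Check]) -> list[str]:
    """Run a candidate against intent-level checks and describe any failures."""
    failures: list[str] = []
    for args, expected in checks:
        try:
            actual = candidate(*args)
        except Exception as exc:  # a crash counts as a failed check
            failures.append(f"{args!r}: raised {type(exc).__name__}")
            continue
        if actual != expected:
            failures.append(f"{args!r}: expected {expected!r}, got {actual!r}")
    return failures


# Hand-written stand-in for code an assistant might produce (hypothetical).
def generated_slugify(title: str) -> str:
    return "-".join(title.lower().split())


# The developer's intent, stated as examples instead of as an implementation.
checks: list[Check] = [
    (("Hello World",), "hello-world"),
    (("  Screenless  Coding ",), "screenless-coding"),
]

failures = validate(generated_slugify, checks)
print("accepted" if not failures else "\n".join(failures))
```

The design choice here is that the developer's effort goes into the checks, which could just as easily be dictated aloud as typed, while the implementation details are left to the generating system.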

Still, researchers caution that screens are unlikely to disappear entirely. Visual representation remains highly efficient for understanding complex systems, debugging interactions, and organizing large software architectures. Instead of replacing screens outright, many experts envision hybrid environments where voice, AI, spatial interfaces, and conventional displays coexist.

Ultimately, the article frames programming as an evolving human–machine collaboration rather than a fixed technical practice. As interfaces become more adaptive and intelligent, the future developer may work across physical and digital spaces in ways that look very different from today’s desk-bound coding culture.