The IEEE Spectrum article examines the idea of orbital data centers, compute infrastructure hosted above Earth, which has drawn interest from Silicon Valley heavyweights such as Elon Musk, Jeff Bezos, Sundar Pichai, and Nvidia’s leadership. The central appeal of orbiting server farms comes from the physics of space: continuous sunlight for power generation, and a vacuum that permits radiative cooling free of the constraints Earth’s atmosphere imposes. In this view, orbital platforms could run demanding workloads such as artificial intelligence without relying on terrestrial power grids or massive cooling systems.

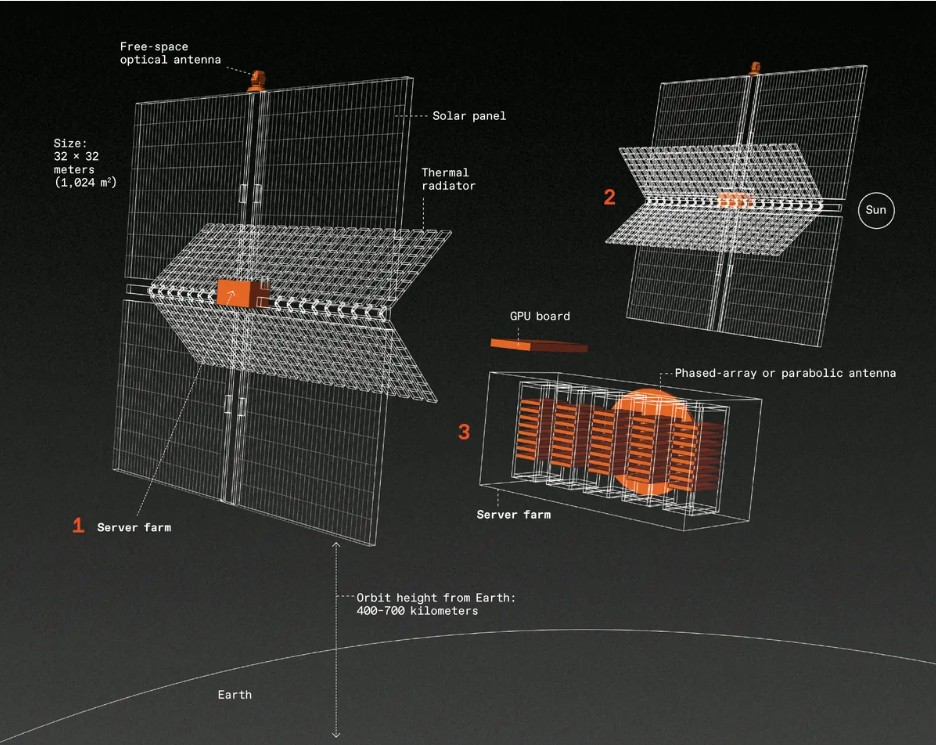

The article emphasizes the scale of such systems. A conceptual satellite might carry photovoltaic arrays covering more than a thousand square meters, producing hundreds of kilowatts for processors and networking equipment. To match the capacity of a modern gigawatt-class terrestrial data center, thousands of such satellites would have to operate as a tightly networked constellation in low Earth orbit.
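
The scale argument can be checked with back-of-the-envelope arithmetic. The sketch below is illustrative only: the 30% array efficiency and the 300 kW per-satellite figure are assumptions chosen to fall within the "hundreds of kilowatts" range, not numbers taken from the article, and the function names are hypothetical. It ignores eclipse periods, conversion losses, and power spent on anything other than compute.

```python
import math

# Approximate solar irradiance above the atmosphere, in W/m^2.
SOLAR_CONSTANT_W_M2 = 1361.0


def array_power_w(area_m2: float, efficiency: float) -> float:
    """Electrical power from a sun-facing array (assumed ideal pointing)."""
    return area_m2 * SOLAR_CONSTANT_W_M2 * efficiency


def satellites_needed(target_w: float, per_satellite_w: float) -> int:
    """Satellites required to reach a target power, rounded up."""
    return math.ceil(target_w / per_satellite_w)


if __name__ == "__main__":
    # Assumption: 1,000 m^2 of array at 30% efficiency (not from the article).
    per_sat = array_power_w(1000.0, 0.30)
    print(f"Per-satellite power: {per_sat / 1e3:.0f} kW")

    # Assumption: a 1 GW terrestrial facility as the comparison point.
    print("Satellites for 1 GW:", satellites_needed(1e9, per_sat))
    print("Satellites at 300 kW each:", satellites_needed(1e9, 300e3))
```

Under these assumptions the count lands in the low thousands, which is consistent with the article's framing; different efficiency or per-satellite figures shift the number but not the order of magnitude.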

Cost is the crux of the challenge. Engineering, launching, networking, and maintaining a constellation of thousands of satellites carries an astronomical price tag: over five years, it could exceed the cost of building and operating equivalent terrestrial facilities by a factor of about three. That estimate includes launch costs, the hardware itself, and operational logistics in the orbital environment.

In addition to cost, practical and technical obstacles abound. Space hardware must withstand launch stresses, radiation, and long durations in vacuum. Heat management in orbit requires large radiator surfaces, which increase launch mass. On-orbit servicing and upgrades remain nascent capabilities, meaning failed hardware could become stranded or contribute to space debris.
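
To see why radiators become large, the Stefan-Boltzmann law gives a rough lower bound on the area needed to reject a given heat load. The following is a minimal sketch under stated assumptions: the 300 K radiator temperature, 0.9 emissivity, and 300 kW heat load are illustrative values, not figures from the article, and the function name is hypothetical. It also neglects heat absorbed from sunlight and from Earth, so real radiators would need to be larger.

```python
# Stefan-Boltzmann constant, in W / (m^2 K^4).
STEFAN_BOLTZMANN = 5.670374419e-8


def radiator_area_m2(heat_w: float, temp_k: float,
                     emissivity: float = 0.9, sides: int = 1) -> float:
    """Idealized radiator area needed to reject heat_w to deep space.

    Assumes a uniform surface temperature and no absorbed sunlight or
    Earth infrared; `sides` is 2 if both faces radiate freely.
    """
    flux_w_m2 = emissivity * STEFAN_BOLTZMANN * temp_k ** 4
    return heat_w / (flux_w_m2 * sides)


if __name__ == "__main__":
    # Assumption: all 300 kW of electrical power ends up as waste heat.
    one_sided = radiator_area_m2(300e3, 300.0)
    two_sided = radiator_area_m2(300e3, 300.0, sides=2)
    print(f"One-sided radiator: {one_sided:.0f} m^2")
    print(f"Two-sided radiator: {two_sided:.0f} m^2")
```

With these inputs the radiator area is of the same order as the solar array itself, which illustrates why heat rejection adds so much to launch mass. Running the radiator hotter shrinks the area quickly, since emitted power scales with the fourth power of temperature.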

The article doesn’t dismiss the concept outright, but it positions orbital data centers as a speculative, high-investment idea that blends visionary appeal with real constraints in economics and space systems engineering.