Autonomous vehicles have made impressive strides in recent years, moving from simple lane-keeping systems to cars that can navigate city streets, recognize pedestrians, and respond to traffic control devices with reasonable success. Still, the most difficult challenges for driverless cars are not the everyday situations they encounter most often but the rare, unpredictable “long-tail” events that fall outside the patterns seen in training data. These include unexpected construction zones, erratic road user behavior, and emergency vehicles appearing suddenly—scenarios that defy simple pattern recognition and require judgment under uncertainty. Traditional sensor systems and rule-based software pipelines, where separate modules detect objects, predict motion, and plan responses, can struggle in these cases because they are designed to react to known patterns rather than reason through unfamiliar ones.

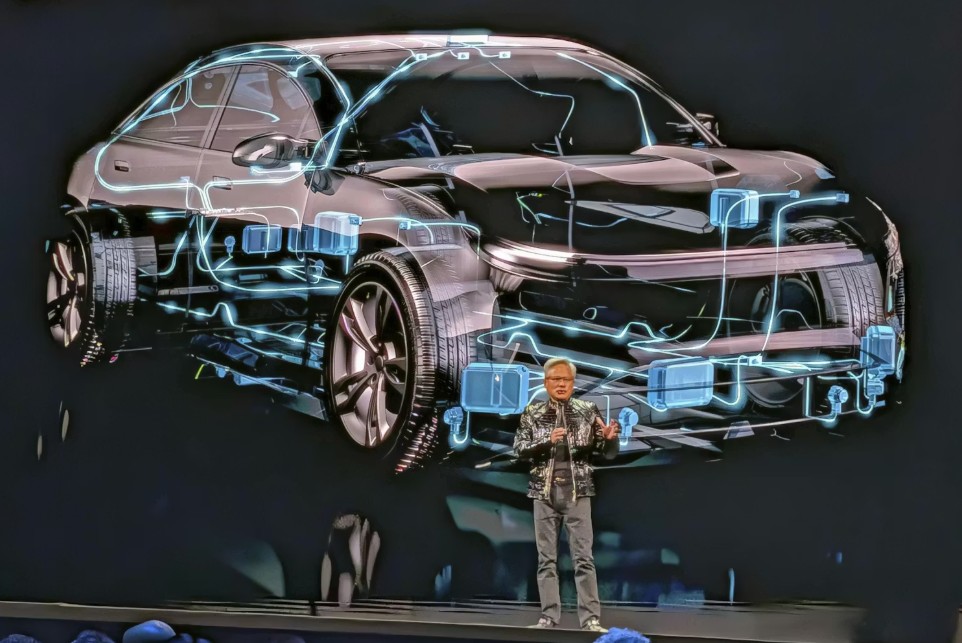

According to The Conversation, researchers are trying to overcome this limitation with a class of artificial intelligence models known as vision-language-action (VLA) systems. These models combine visual perception, language-based reasoning, and action generation in a single system. Instead of merely identifying objects on the road, a VLA model forms an internal “thought process” that considers multiple possible interpretations of a scene before generating actions such as steering or braking. The aim is to let autonomous systems reason more like humans, weighing uncertainty and choosing behavior accordingly. One example gaining attention is Nvidia’s open-source Alpamayo platform, which combines extensive driving datasets with powerful simulation tools and reasoning-oriented AI techniques. Alpamayo supports “intermediate reasoning traces,” which show not just what the system sees but why it made a particular decision, an important step toward transparency and safety in autonomous driving.
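The idea of weighing several interpretations of a scene and recording the reasoning behind an action can be illustrated with a deliberately simple toy. The sketch below is not Alpamayo's API and not how a VLA model works internally (those are learned neural networks); the scene labels, probabilities, cost table, and the `choose_action` function are all made-up assumptions used only to show the shape of the idea.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interpretation:
    label: str          # hypothetical reading of the scene
    probability: float  # made-up belief that this reading is correct


def choose_action(interpretations, costs):
    """Pick the action with the lowest expected cost and record why."""
    trace = []
    expected = {}
    for action, cost_by_label in costs.items():
        # Weight the cost of this action under each reading by its probability.
        total = sum(i.probability * cost_by_label[i.label] for i in interpretations)
        expected[action] = total
        trace.append(f"{action}: expected cost {total:.2f}")
    best = min(expected, key=expected.get)
    trace.append(f"chosen: {best}")
    return best, trace


# Illustrative scene: flashing lights ahead, with three competing readings.
scene = [
    Interpretation("emergency_vehicle", 0.5),
    Interpretation("construction_zone", 0.3),
    Interpretation("no_hazard", 0.2),
]

# Assumed cost of each action if a given reading turns out to be true.
COSTS = {
    "maintain_speed": {"emergency_vehicle": 10, "construction_zone": 6, "no_hazard": 0},
    "slow_down": {"emergency_vehicle": 4, "construction_zone": 1, "no_hazard": 1},
    "pull_over": {"emergency_vehicle": 0, "construction_zone": 3, "no_hazard": 4},
}

action, trace = choose_action(scene, COSTS)
for line in trace:
    print(line)
```

With these invented numbers the toy settles on pulling over, and the printed trace lists the expected cost of every option it considered. A real reasoning trace would be far richer, but the principle is the same: the decision comes with a record of the alternatives that were weighed.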

This approach will not deliver fully self-driving consumer cars right away, but it marks a significant shift from perception-only systems toward systems that can handle the hardest parts of real-world driving. If reasoning-based models continue to improve, they could help autonomous vehicles cope with the unpredictable moments that currently limit their deployment on public roads.