This Live Science article traces the history of computers, which stretches across centuries, beginning long before silicon chips or keyboards and evolving through major innovations that reshaped science, business, and everyday life. Early calculation tools included simple devices like the abacus, used for millennia to make arithmetic easier. In the 17th century, John Napier introduced logarithms and William Oughtred devised the slide rule, while Blaise Pascal and Gottfried Wilhelm Leibniz built early mechanical calculating machines that automated addition and subtraction and, eventually, multiplication and division. These early milestones set the stage for programmable computation.

In the 19th century, Charles Babbage conceptualized the Difference Engine and later the more ambitious Analytical Engine, mechanical designs that embodied fundamental ideas of modern computing. Working alongside Babbage, Ada Lovelace wrote what some consider the first computer program, recognizing that machines could follow algorithmic instructions.

The 20th century accelerated progress, first with large analog and electromechanical machines and then with electronic ones. The Differential Analyzer, a mechanical analog computer, and theoretical models such as Alan Turing’s universal machine laid the groundwork for general-purpose computing. Pioneers including Konrad Zuse, John Atanasoff, Clifford Berry, and the team behind ENIAC helped move computing from mechanical gears to electronic circuits. These innovations enabled the first commercial computers, such as UNIVAC, and ushered in a new era of automation and data processing.

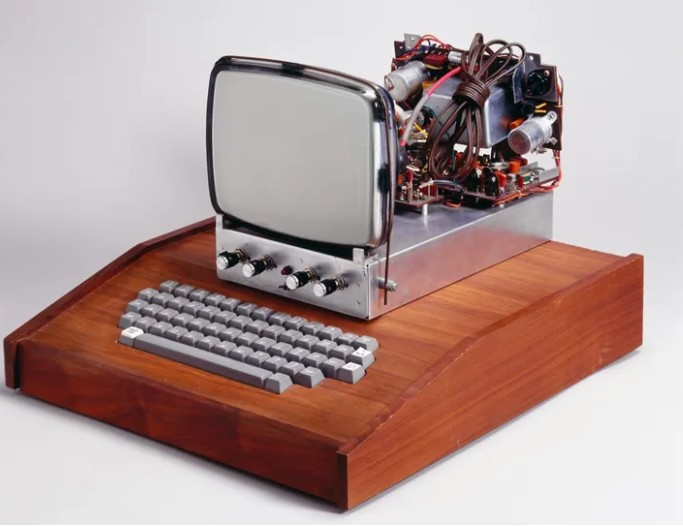

Mid-century breakthroughs, first the transistor and later the integrated circuit, shrank computers while boosting their power. The same era produced high-level programming languages such as FORTRAN and COBOL. Personal computing took off in the 1970s and 1980s with products from Apple, IBM, and other companies, bringing computing into homes and businesses. Networks, operating systems, and graphical interfaces transformed how people interacted with machines.

In the 21st century, computing continues to evolve through internet-connected systems, powerful mobile devices, and research into quantum computing. From mechanical calculators to AI-driven systems, computers have become indispensable tools, and their history reflects a layered, collaborative process of innovation.