This Tech Xplore article discusses a new study showing how physical neural networks (PNNs), here analog AI systems built on photonic (light-based) hardware, can make AI training much more energy-efficient. Conventional neural networks rely on digital computation, which consumes large amounts of electricity, especially during training. PNNs aim to reduce that energy demand.

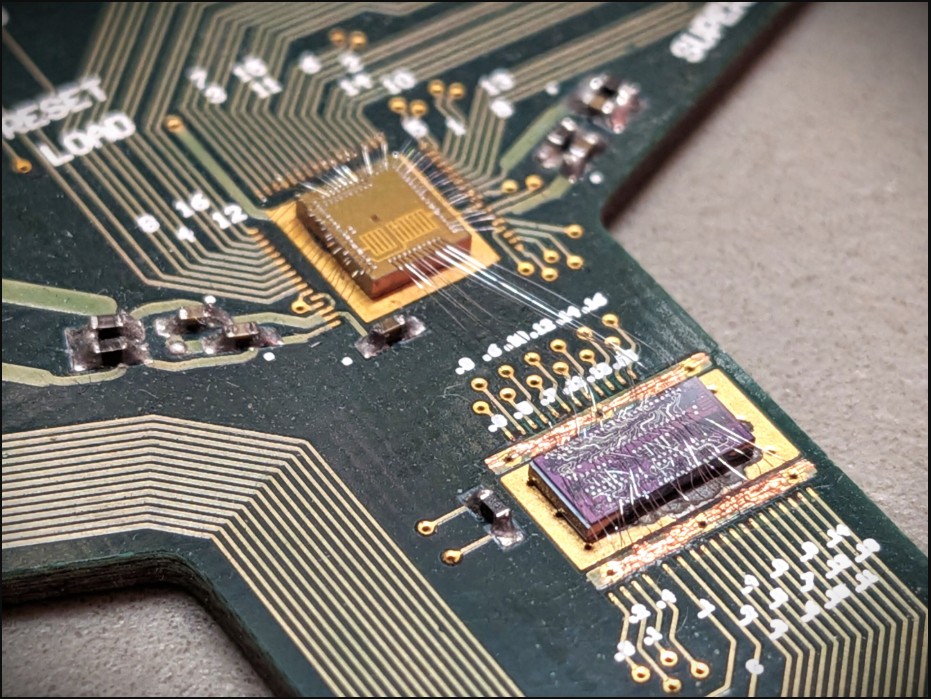

A multidisciplinary team spanning Politecnico di Milano, EPFL, Stanford, Cambridge, and the Max Planck Institute has published research in Nature demonstrating a photonic chip that performs mathematical operations such as addition and multiplication through the interference of light. The silicon microchips involved are just a few square millimeters in size.
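To make "addition and multiplication using light interference" concrete, here is a minimal numerical sketch. It is not the chip's actual design, which the article does not describe. It assumes an idealized, lossless Mach-Zehnder interferometer, a common building block in photonic processors, and the function names (`beam_splitter`, `phase_shift`, `mzi`, `power`) are hypothetical. A 50:50 splitter produces the scaled sum and difference of two optical fields (addition), and a phase shifter multiplies a field by a complex factor (multiplication).

```python
import cmath
import math


def beam_splitter(a, b):
    """Idealized 50:50 splitter (assumed real-valued convention).

    Interference yields the scaled sum and difference of the two input fields.
    """
    s = 1 / math.sqrt(2)
    return s * (a + b), s * (a - b)


def phase_shift(field, theta):
    """Multiply an optical field by the complex factor exp(i * theta)."""
    return field * cmath.exp(1j * theta)


def mzi(a, b, theta):
    """Assumed Mach-Zehnder layout: splitter, phase shift on the top arm, splitter."""
    top, bottom = beam_splitter(a, b)
    top = phase_shift(top, theta)
    return beam_splitter(top, bottom)


def power(field):
    """Optical power is the squared magnitude of the complex field amplitude."""
    return abs(field) ** 2


# Light enters the top port only; theta sets how the power is divided.
theta = math.pi / 3
out_top, out_bottom = mzi(1.0, 0.0, theta)
print(power(out_top), power(out_bottom))
```

In this idealized model the top output carries a fraction cos²(θ/2) of the input power, so the phase setting acts as a tunable weight between 0 and 1, and the two output powers always sum to the input power.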

Crucially, the paper introduces an in situ training method for these physical neural networks, meaning that some or all aspects of training happen in the analog photonic hardware itself (with light signals), rather than completely in digital simulations. This cuts out energy losses and time delays due to conversions between analog and digital domains.
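The idea of training in the hardware itself can be illustrated with a toy loop. This is only a sketch of one generic approach (estimating gradients by nudging a device setting and re-measuring the output), not the method from the paper, which the article does not detail. `device_output` is a hypothetical stand-in for a physical measurement, modeled here as a single tunable transmission cos²(θ/2), and every name and number in it is an assumption.

```python
import math


def device_output(theta, x):
    """Hypothetical stand-in for measuring the physical device.

    Modeled as an input x scaled by a tunable transmission cos^2(theta / 2).
    """
    return x * math.cos(theta / 2) ** 2


def measured_loss(theta, samples):
    """Mean squared error between measured outputs and targets."""
    errors = [(device_output(theta, x) - y) ** 2 for x, y in samples]
    return sum(errors) / len(errors)


def train_in_situ(samples, theta=1.0, lr=0.5, delta=1e-3, steps=200):
    """Tune theta using only measurements, with no digital model of the device.

    The gradient is estimated by a central finite difference: perturb the
    setting by +/- delta, measure the loss each time, and compare.
    """
    for _ in range(steps):
        loss_plus = measured_loss(theta + delta, samples)
        loss_minus = measured_loss(theta - delta, samples)
        grad = (loss_plus - loss_minus) / (2 * delta)
        theta -= lr * grad
    return theta


# Toy task (assumed): learn to pass one quarter of the input power.
samples = [(x, 0.25 * x) for x in (0.5, 1.0, 1.5, 2.0)]
theta = train_in_situ(samples)
print(theta, math.cos(theta / 2) ** 2)
```

The training loop never inspects how `device_output` works internally; it only sets a parameter and reads a result. That is the property that lets the update step run against real hardware instead of a digital simulation of it.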

The benefits are twofold: lower energy consumption and faster processing. This approach could allow AI models to be trained and run locally on devices rather than in remote data centers, which is useful for edge computing, autonomous cars, and intelligent sensors.

However, physical neural networks are still experimental, and open problems remain around noise, precision, material limitations, and scalability. The study argues that as these technologies mature, they could help make AI far more sustainable by lowering energy costs and reducing reliance on massive cloud-based digital computing.