NVIDIA released CUDA 13.1, describing it as an important update to the platform it first introduced in 2006. The version adds CUDA Tile, a programming model that shifts GPU development toward higher-level data structures and reduces the need for manual thread control.

Tile Model Changes How Developers Organize GPU Work

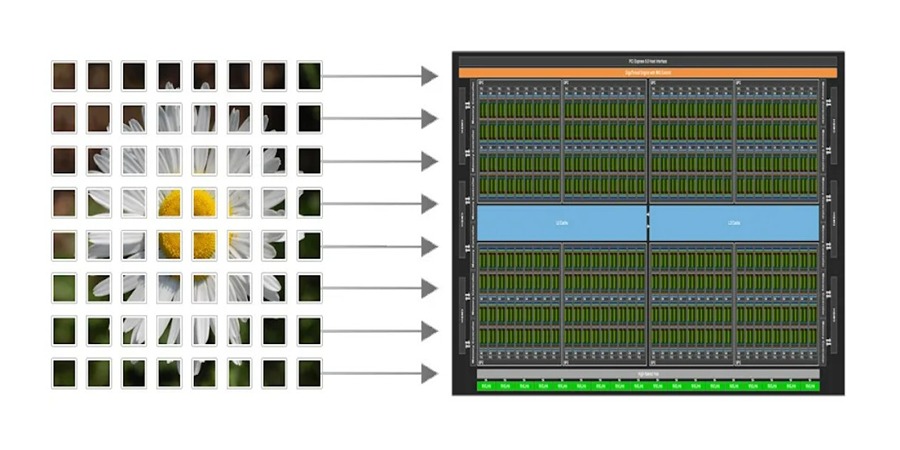

CUDA Tile lets developers express work in terms of structured tiles of data rather than managing thousands of individual threads. NVIDIA said the model removes low-level tasks that require careful tuning, including thread coordination, memory alignment, and synchronization, so teams can focus on algorithms instead of hardware details.

NVIDIA also said tiles group related data into blocks that represent parallel work clearly. The structure reduces repetitive code while keeping performance control with the developer. CUDA Tile also adapts to different NVIDIA GPU architectures, including future versions. The company said this gives developers a predictable path as hardware evolves.
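To make the idea of grouping data into tiles concrete, here is a minimal conceptual sketch in plain Python. It is not the CUDA Tile API and does not run on a GPU. The tile width and the names `TILE`, `split_into_tiles`, and `tiled_add` are hypothetical, chosen only for illustration. It shows a vector addition written as whole-tile operations, with no per-element index arithmetic in the calling code.

```python
# Conceptual illustration only: plain Python, not the CUDA Tile API.
# All names here (TILE, split_into_tiles, tiled_add) are hypothetical.

TILE = 4  # assumed tile width, chosen for illustration


def split_into_tiles(data, tile=TILE):
    """Group a flat list into fixed-size tiles (the last tile may be shorter)."""
    return [data[i:i + tile] for i in range(0, len(data), tile)]


def tiled_add(a, b, tile=TILE):
    """Add two equal-length vectors one tile at a time.

    Each loop iteration stands in for one independent unit of parallel work.
    How such a unit would map onto GPU threads is deliberately left out,
    since that is the detail a tile-style model is meant to hide.
    """
    if len(a) != len(b):
        raise ValueError("inputs must have the same length")
    out = []
    for tile_a, tile_b in zip(split_into_tiles(a, tile), split_into_tiles(b, tile)):
        # Whole-tile operation: the caller never computes element indices.
        out.extend(x + y for x, y in zip(tile_a, tile_b))
    return out


if __name__ == "__main__":
    a = list(range(10))
    b = [10] * 10
    print(split_into_tiles(a))
    print(tiled_add(a, b))
```

In a thread-level kernel, the equivalent code would typically compute a global index per thread and guard against running past the end of the array. Here the ragged final tile is handled by the tiling helper instead.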

CUDA Tile supports thread-block clusters, distributed shared memory, asynchronous operations, and cooperative scheduling. NVIDIA said these features help scale workloads across larger GPU systems and reduce overhead from managing threads by hand.

CUDA 13.1 Improves Compilers, Tools, and Libraries

CUDA 13.1 adds upgrades across compilers, libraries, debugging tools, and performance analyzers. NVIDIA strengthened compiler optimization for memory operations and expanded support for C++ standard parallel algorithms. Updated profiling tools help developers track tile behavior during execution and tune their code accordingly.

The update also improves how CUDA works with cuBLAS, cuDNN, TensorRT, and Triton. NVIDIA said this helps teams running AI inference, scientific computing, robotics simulation, and digital-twin workloads achieve consistent performance across GPU-accelerated frameworks.

For high-performance and AI workloads, CUDA 13.1 adds faster data paths for tensor operations, improved memory management, and better multi-GPU scaling. NVIDIA said these changes support large language models, molecular dynamics, fluid simulation, seismic imaging, and real-time robotics.

Adoption Expands Across Engineering and AI Teams

CUDA Tile supports engineering tasks such as finite-element solvers, CFD modeling, and simulation pipelines that often require GPU tuning. Imaging and vision teams can simplify filtering, feature extraction, and sensor fusion by avoiding low-level thread control.

AI researchers can write training and inference code that adapts to new GPU architectures without rewriting kernels. Robotics teams can manage workloads that span edge devices and cloud systems. Teams in energy, automotive, aerospace, and manufacturing can use CUDA 13.1 to speed up digital twins, physics models, and optimization work.

CUDA Remains a Core Platform for Accelerated Computing

CUDA supports more than 6 million developers in research, enterprise, and scientific work. CUDA Tile lowers the effort required for GPU programming while supporting performance demands for advanced AI and simulation. CUDA 13.1 is available through NVIDIA’s developer platform with documentation and migration tools.

Source: NVIDIA

About NVIDIA


NVIDIA, founded in 1993 and headquartered in Santa Clara, CA, designs and manufactures graphics processing units, systems on chips, networking hardware, and AI software such as CUDA. Its products serve industries including gaming, data centers, autonomous vehicles, professional visualization, robotics, health care, and energy. The company introduced the GPU in 1999 and later expanded into accelerated computing and AI infrastructure. In gaming, its GPUs support high-performance rendering, while in AI and high-performance computing, its systems provide the infrastructure for training and deploying large-scale models. NVIDIA also develops tools for robotics and autonomous driving.