PartCrafter is a new open-source generative AI system that converts a single RGB image into multiple structured 3D part meshes in well under a minute, according to an article in 3D Printing Industry. Developed by researchers from Peking University, ByteDance, and Carnegie Mellon University, PartCrafter is described in a June 2025 arXiv preprint and is available as a public demo.

What sets it apart:

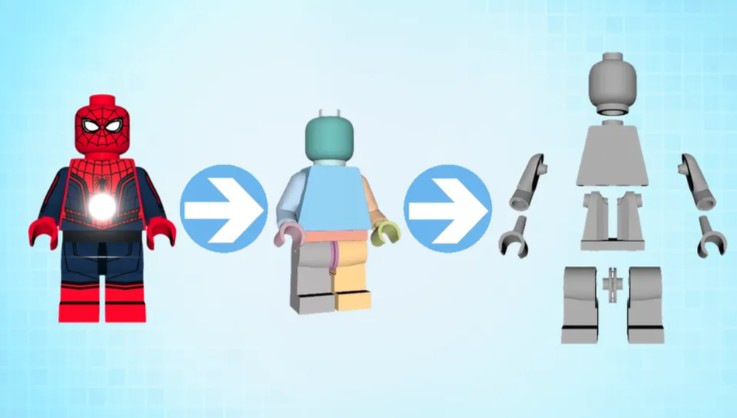

- Compositional latent diffusion transformer architecture: Unlike earlier methods that first segment an object and then reconstruct each segment, PartCrafter builds part awareness into the diffusion process itself, generating separate part meshes directly without a manual segmentation step.

- Multi-part aligned mesh output: It can generate up to 16 discrete, non-overlapping meshes, all aligned in a shared coordinate frame, which simplifies assembly and downstream CAD processing.

- Benchmark performance: Trained with supervision on roughly 50,000 part-annotated models (from Objaverse, ShapeNet, and ABO), PartCrafter achieves an L₂ Chamfer distance of 0.1726 versus HoloPart's 0.1916 (lower is better), while cutting generation time from about 18 minutes to about 34 seconds on a single H20 GPU (roughly 32× faster).
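The Chamfer distance quoted above measures how far two shapes' surface samples lie from each other: for every point in one set, take the distance to its nearest neighbour in the other set, average those distances, and add the result for both directions (lower is better). The following is a brute-force Python sketch of one common formulation, assuming both shapes have already been sampled as lists of 3D points. It is not PartCrafter's evaluation code; the paper's exact choices (squared vs. unsquared distances, number of sample points, mesh-scale normalization) are assumptions here, so its outputs are not comparable to the 0.1726 and 0.1916 figures. The last lines check the quoted speedup arithmetic.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float, float]


def _nearest_sq_dist(p: Point, cloud: Sequence[Point]) -> float:
    """Squared Euclidean distance from p to its nearest point in cloud (brute force)."""
    return min(
        (p[0] - q[0]) ** 2 + (p[1] - q[1]) ** 2 + (p[2] - q[2]) ** 2 for q in cloud
    )


def chamfer_distance(a: Sequence[Point], b: Sequence[Point], squared: bool = True) -> float:
    """Symmetric Chamfer distance: mean nearest-neighbour distance a->b plus b->a.

    squared=True averages squared distances; squared=False averages plain
    Euclidean distances. Which variant a given benchmark reports must be checked.
    """
    if not a or not b:
        raise ValueError("both point sets must be non-empty")
    dist = (lambda d: d) if squared else math.sqrt
    a_to_b = sum(dist(_nearest_sq_dist(p, b)) for p in a) / len(a)
    b_to_a = sum(dist(_nearest_sq_dist(q, a)) for q in b) / len(b)
    return a_to_b + b_to_a


# Toy example: the prediction misplaces one point by 0.5 along y.
gt = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
pred = [(0.0, 0.0, 0.0), (1.0, 0.5, 0.0)]
print(chamfer_distance(gt, pred))                 # 0.25
print(chamfer_distance(gt, pred, squared=False))  # 0.5

# Speedup check for the quoted timings: 18 min = 1080 s vs. 34 s.
print(round(18 * 60 / 34, 1))  # 31.8, i.e. roughly 32x
```

Brute-force nearest-neighbour search costs O(|A|·|B|); evaluations over thousands of sampled surface points normally use a k-d tree or batched GPU operations instead.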

Engineers can now go from a single image to a multi-part mesh suitable for fabrication in well under a minute, which suits rapid prototyping, reverse engineering, and feeding CAD and simulation workflows. The open-source, MIT-licensed release can be integrated into existing additive manufacturing pipelines and customized. Future plans include scaling training to millions of annotated parts and embedding physical priors so that generated parts meet real-world tolerances in assemblies.
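Because every generated part shares one coordinate frame, handing the result to a print or CAD pipeline needs no per-part transforms: each part can be exported on its own, or the parts can be concatenated into one assembly mesh by shifting triangle indices. The sketch below assumes each part is available as a plain vertex list plus triangle index list; the `PartMesh` container and the helper functions are hypothetical illustrations, not PartCrafter's own API or output format. Export uses the standard ASCII STL layout (`solid` / `facet normal` / `outer loop` / `vertex` / `endloop` / `endfacet` / `endsolid`).

```python
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
Tri = Tuple[int, int, int]


@dataclass
class PartMesh:
    """Hypothetical container for one generated part, in the shared object frame."""
    name: str
    vertices: List[Vec3]
    faces: List[Tri]  # zero-based vertex indices, counter-clockwise winding


def merge_parts(parts: List[PartMesh]) -> PartMesh:
    """Concatenate aligned parts into one assembly mesh.

    The parts already share a coordinate frame, so vertices are copied as-is;
    only each part's face indices are offset by the vertices emitted before it.
    This is a plain concatenation, not a boolean union.
    """
    vertices: List[Vec3] = []
    faces: List[Tri] = []
    for part in parts:
        offset = len(vertices)
        vertices.extend(part.vertices)
        faces.extend((a + offset, b + offset, c + offset) for a, b, c in part.faces)
    return PartMesh("assembly", vertices, faces)


def _unit_normal(a: Vec3, b: Vec3, c: Vec3) -> Vec3:
    """Unit normal of triangle (a, b, c) via the cross product of two edges."""
    ux, uy, uz = (b[i] - a[i] for i in range(3))
    vx, vy, vz = (c[i] - a[i] for i in range(3))
    n = (uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx)
    length = sum(x * x for x in n) ** 0.5 or 1.0  # guard degenerate triangles
    return (n[0] / length, n[1] / length, n[2] / length)


def to_ascii_stl(part: PartMesh) -> str:
    """Serialize one part as ASCII STL, one facet per triangle."""
    lines = [f"solid {part.name}"]
    for a, b, c in part.faces:
        va, vb, vc = part.vertices[a], part.vertices[b], part.vertices[c]
        nx, ny, nz = _unit_normal(va, vb, vc)
        lines.append(f"  facet normal {nx:e} {ny:e} {nz:e}")
        lines.append("    outer loop")
        for v in (va, vb, vc):
            lines.append(f"      vertex {v[0]:e} {v[1]:e} {v[2]:e}")
        lines.append("    endloop")
        lines.append("  endfacet")
    lines.append(f"endsolid {part.name}")
    return "\n".join(lines) + "\n"


# Usage: two single-triangle "parts" stacked along z in the shared frame.
base = PartMesh("base", [(0, 0, 0), (1, 0, 0), (0, 1, 0)], [(0, 1, 2)])
lid = PartMesh("lid", [(0, 0, 1), (1, 0, 1), (0, 1, 1)], [(0, 1, 2)])
assembly = merge_parts([base, lid])
print(assembly.faces)  # [(0, 1, 2), (3, 4, 5)]
stl_text = to_ascii_stl(base)  # write to base.stl for a slicer, if desired
```

Keeping parts as separate files preserves the part structure for slicing or tolerance adjustments, while the merged mesh is convenient for previewing the whole object.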

PartCrafter bridges the gap between 2D visual cues and structured 3D models. Its fast, part-aware mesh generation produces output that can feed directly into CAD workflows, shortening design-to-print cycles and improving efficiency in additive manufacturing.

PartCrafter is available on GitHub.