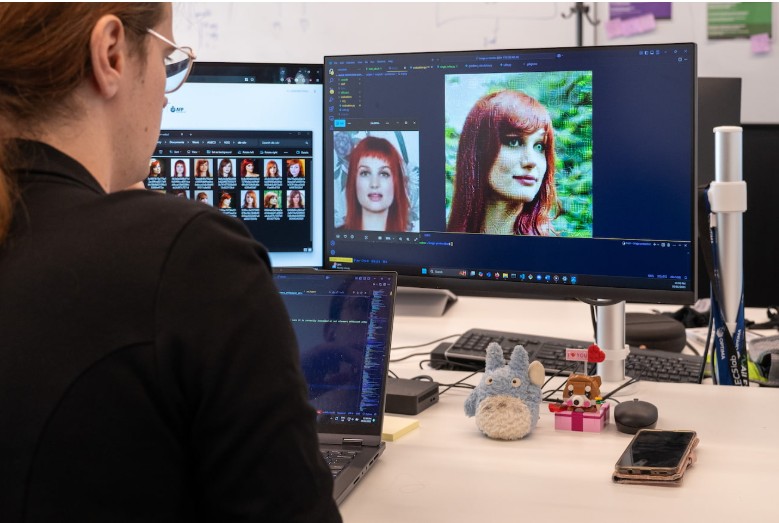

A joint effort between Monash University’s AI for Law Enforcement and Community Safety (AiLECS) Lab and the Australian Federal Police (AFP) has produced a prototype tool, Silverer, that uses data-poisoning techniques to counter AI-generated image abuse. The key idea: before someone uploads photos online, Silverer makes imperceptible modifications to the pixel data. When a deep-learning model is later trained or fine-tuned on those images for malicious purposes, such as producing non-consensual deepfakes or child-abuse material, the modifications cause it to generate distorted, low-quality outputs instead of usable images, according to an article from Monash University.

Silverer has been under development for at least 12 months. According to AiLECS lead Elizabeth Perry, a PhD candidate, the name refers to the silver backing of old mirrors: a protected image behaves like glass behind a thin silver coating, so attackers who try to look through it end up with a useless reflection. The tool is intended for members of the public and social-media users, who can “poison” their own images before posting them, making it harder for criminals to train or fine-tune generative-AI systems on victim data.

From a design-engineering perspective, this work matters for several reasons. It adds a layer of defense at the point where images are created and shared, before they can reach an abusive AI pipeline. It also sets a precedent for proactive asset-protection tools rather than purely reactive forensics. For system architects, the takeaway is that introducing engineered noise or adversarial patterns at upload time can shift effort from after-the-fact deepfake detection to prevention.
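The article does not describe Silverer's actual algorithm, so the following is only a minimal sketch of the general pixel-level idea: nudging each pixel by a small, bounded amount so the change stays hard to see. It assumes 8-bit pixel values and a hypothetical `direction` vector standing in for the gradient that a real poisoning tool would compute against a surrogate model. The function name `perturb_within_budget` and the budget `epsilon` are illustrative, not part of any published tool.

```python
from typing import List, Sequence


def sign(x: float) -> int:
    """Return -1, 0, or 1 according to the sign of x."""
    return (x > 0) - (x < 0)


def perturb_within_budget(
    pixels: Sequence[int],
    direction: Sequence[float],
    epsilon: int = 2,
) -> List[int]:
    """Shift each 8-bit pixel by at most `epsilon` along sign(direction).

    ASSUMPTION: `direction` is a hypothetical stand-in for a gradient from
    a surrogate model. This is a generic bounded sign step, not Silverer's
    method, which the source article does not specify.
    """
    if len(pixels) != len(direction):
        raise ValueError("pixels and direction must have the same length")
    out = []
    for p, d in zip(pixels, direction):
        shifted = p + epsilon * sign(d)
        out.append(max(0, min(255, shifted)))  # clip to the valid 8-bit range
    return out


def max_abs_change(a: Sequence[int], b: Sequence[int]) -> int:
    """Largest per-pixel difference between two equally sized images."""
    return max(abs(x - y) for x, y in zip(a, b))


# Toy flattened "image" and made-up direction values, for illustration only.
original = [0, 128, 254, 255, 37]
direction = [-0.4, 0.9, 1.2, 0.3, 0.0]
protected = perturb_within_budget(original, direction, epsilon=2)
```

Here `max_abs_change(original, protected)` never exceeds `epsilon`, which is what keeps the modification visually subtle. The hard part of a real tool, choosing a direction that actually degrades a model trained on the image, is deliberately left out of this sketch.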

Silverer represents a proactive approach to deepfake mitigation: by “poisoning” image data at the source, it raises the technical barrier to misuse. While still a prototype and limited in scope, the initiative marks a step toward embedding defense mechanisms in user workflows rather than relying solely on detection after the damage is done.