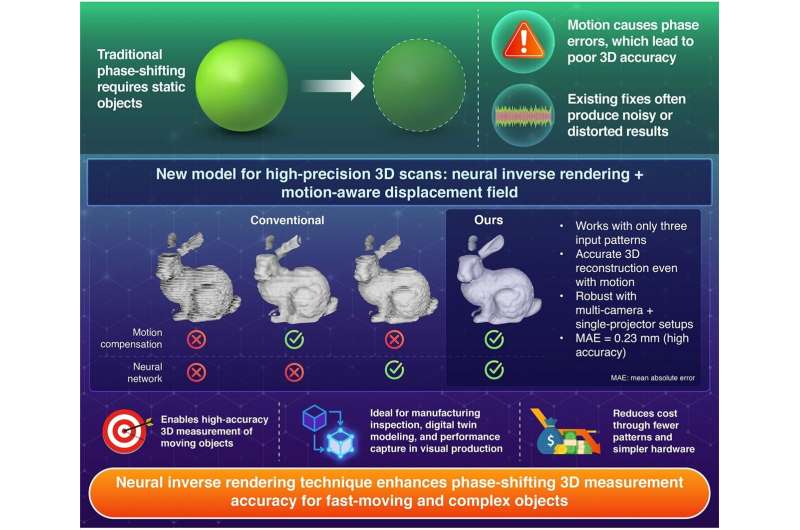

Researchers at the Institute of Science Tokyo have developed a new structured-light method that significantly improves 3D shape capture of moving objects, reports Tech Xplore. Conventional phase-shifting techniques struggle with motion because they project and capture several sinusoidal patterns in sequence. If the object moves even slightly between captures, the patterns no longer line up pixel for pixel, and the misalignment introduces errors into the recovered shape.
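To see why motion hurts, it helps to look at the standard three-step phase-shifting formula. The sketch below is a minimal illustration under stated assumptions, not the researchers' code: it uses Python with NumPy, assumes the common shift sequence of -2π/3, 0 and +2π/3, and uses made-up intensity parameters (`a`, `b`), a toy fringe phase and a hypothetical per-frame phase drift. It simulates one image row, recovers the wrapped phase, and compares a static object with one whose phase changes between captures.

```python
import numpy as np

# Nominal three-step pattern shifts (an assumption; other sequences exist).
SHIFTS = np.array([-2 * np.pi / 3, 0.0, 2 * np.pi / 3])


def capture(phases, a=0.5, b=0.4):
    """Simulate three captured intensities I_k = a + b*cos(phase_k + shift_k).

    `phases` holds one phase map per frame, so a phase that changes
    between frames models an object that moved between captures.
    """
    return [a + b * np.cos(p + s) for p, s in zip(phases, SHIFTS)]


def wrapped_phase(i1, i2, i3):
    """Standard three-step formula; result is wrapped to [-pi, pi]."""
    return np.arctan2(np.sqrt(3.0) * (i1 - i3), 2.0 * i2 - i1 - i3)


def phase_error(estimate, truth):
    """Absolute angular difference, ignoring whole multiples of 2*pi."""
    return np.abs(np.angle(np.exp(1j * (estimate - truth))))


# One image row of a toy surface: three fringe periods of phase.
x = np.linspace(0.0, 1.0, 200)
phi_true = 6 * np.pi * x

# Static object: every frame sees the same phase.
static = wrapped_phase(*capture([phi_true, phi_true, phi_true]))

# Moving object: a hypothetical drift of 0.3 rad per frame, centred on
# the middle frame, stands in for motion between projections.
drift = 0.3
moving = wrapped_phase(*capture([phi_true - drift, phi_true, phi_true + drift]))

print("static max error (rad):", phase_error(static, phi_true).max())  # floating-point noise
print("moving max error (rad):", phase_error(moving, phi_true).max())  # clearly nonzero
```

With a static object the formula is exact up to rounding. With even a small, uniform drift it returns a phase error that ripples with the fringe pattern, which would show up as a ripple in the reconstructed depth.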

The new system uses a neural inverse-rendering framework that jointly models the object's geometry and its motion, expressed as a displacement field, so the algorithm can compensate for movement between pattern projections. Notably, it achieves high-resolution, high-accuracy 3D reconstruction with only three projection patterns, the same number that conventional phase-shifting typically uses for stationary targets.
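The researchers' actual method is a neural inverse-rendering optimization that estimates shape and displacement together. The sketch below is not that method. It only illustrates the underlying intuition under a strong simplifying assumption: the motion-induced phase offset for every frame and pixel is already known (here a hypothetical linear ramp, `offsets`). Folding each offset into that frame's pattern shift and solving a small linear system per pixel, a generalized phase-shifting solve, recovers the reference-frame phase that the standard three-step formula gets wrong. All names and values are illustrative.

```python
import numpy as np

# Nominal three-step pattern shifts (an assumption, as is everything below).
SHIFTS = np.array([-2 * np.pi / 3, 0.0, 2 * np.pi / 3])


def solve_phase(images, shifts):
    """Per-pixel phase from three frames with known, possibly uneven shifts.

    Model per pixel: I_k = A + C*cos(t_k) - S*sin(t_k), where
    C = B*cos(phi) and S = B*sin(phi). Three frames give a 3x3 linear
    system in (A, C, S); phi = atan2(S, C).
    """
    images = np.asarray(images, dtype=float)   # shape (3, N)
    shifts = np.asarray(shifts, dtype=float)   # shape (3, N)
    phi = np.empty(images.shape[1])
    for j in range(images.shape[1]):
        t = shifts[:, j]
        system = np.column_stack([np.ones(3), np.cos(t), -np.sin(t)])
        _, c, s = np.linalg.solve(system, images[:, j])
        phi[j] = np.arctan2(s, c)
    return phi


def max_error(estimate, truth):
    """Largest angular difference, ignoring whole multiples of 2*pi."""
    return np.abs(np.angle(np.exp(1j * (estimate - truth)))).max()


# Toy row: phase of the surface in the reference (middle) frame.
x = np.linspace(0.0, 1.0, 200)
phi_true = 6 * np.pi * x

# Hypothetical motion-induced phase offsets per frame and pixel: the right
# side of the row moves more than the left. Assumed known here.
offsets = np.stack([-0.3 * x, np.zeros_like(x), 0.3 * x])
total_shifts = SHIFTS[:, None] + offsets
images = 0.5 + 0.4 * np.cos(phi_true + total_shifts)

# Standard formula, which assumes only the nominal shifts.
naive = np.arctan2(np.sqrt(3.0) * (images[0] - images[2]),
                   2.0 * images[1] - images[0] - images[2])
# Compensated solve, which folds the known offsets into each frame's shift.
compensated = solve_phase(images, total_shifts)

print("naive max error (rad):", max_error(naive, phi_true))
print("compensated max error (rad):", max_error(compensated, phi_true))
```

In a real capture the offsets are not known in advance, which is why, per the article, the framework models geometry and displacement together instead of assuming either one.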

The experimental setup used a single projector and two cameras in a multi-view configuration, and with it the researchers obtained precise reconstructions even under dynamic conditions. They recently presented their findings at the IEEE/CVF International Conference on Computer Vision (ICCV) 2025.

The method is relevant to industries where parts move or change during measurement, such as manufacturing inspection, digital twin construction, or performance capture in visual production. By handling motion while keeping the number of projections low, the technique opens up 3D sensing in situations where phase-shifting methods were previously impractical.

This points to a major shift: structured-light systems may now be able to capture dynamic scenes with fewer patterns and with less susceptibility to the alignment errors that motion causes, narrowing the gap between high-precision lab setups and real-world dynamic measurement tasks.