A new direction in robotics is emerging, one that shifts intelligence from language-based reasoning to visual understanding rooted in real-world physics. Researchers have developed a video-based AI system that allows robots to anticipate the outcomes of their actions by “imagining” future scenarios before executing them, Tech Xplore reports.

The system is built on large-scale training using internet video data rather than text. While earlier robot models relied heavily on language to interpret instructions, this approach recognizes that language alone provides limited insight into how the physical world behaves. Video, by contrast, captures motion, object interaction, and cause-and-effect relationships, offering a richer foundation for learning.

At the core of the method is a “world model,” an internal representation that enables robots to simulate possible future states. By generating short imagined video sequences, the robot can evaluate different actions and predict their consequences before committing to a movement. This ability to forecast outcomes allows for more adaptive and reliable behavior, especially in unfamiliar environments.
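
The idea of imagining outcomes before acting can be illustrated with a toy planner. The sketch below is not the researchers' system: it assumes a simple scheme in which the robot scores a handful of candidate action sequences and keeps the best one, and it replaces video frames with a single number so the logic stays readable. Every name in it (`toy_world_model`, `imagine_rollout`, `score`, `plan`) is hypothetical.

```python
def toy_world_model(state, action):
    """Hypothetical stand-in for a learned video predictor.

    A real world model would generate future video frames; here the
    "state" is one number and an action simply shifts it.
    """
    return state + action


def imagine_rollout(state, actions, model):
    """Roll the model forward and return the imagined future states."""
    imagined = []
    for action in actions:
        state = model(state, action)
        imagined.append(state)
    return imagined


def score(imagined, goal):
    """Higher is better: negative distance of the final imagined state from the goal."""
    return -abs(imagined[-1] - goal)


def plan(state, goal, model, candidates):
    """Return the candidate action sequence whose imagined outcome scores best."""
    return max(
        candidates,
        key=lambda seq: score(imagine_rollout(state, seq, model), goal),
    )


# Candidate action sequences, assumed to be given (illustrative values only).
candidates = [(1, 1, 1), (2, 2, 1), (-1, 0, 1), (0, 0, 0)]
best = plan(state=0, goal=5, model=toy_world_model, candidates=candidates)
print(best)  # (2, 2, 1): the only sequence whose imagined end state reaches the goal
```

Nothing is executed in the real world until `plan` returns, which is the point of the approach: the trial and error happens inside the model, and only the winning sequence is sent to the robot.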

The approach builds on earlier vision-language-action systems but addresses a key limitation: generalization. Traditional systems often struggle when faced with new objects or tasks outside their training data. By learning from diverse video inputs, the new model develops a broader understanding of physical interactions, enabling robots to apply knowledge more flexibly across situations.

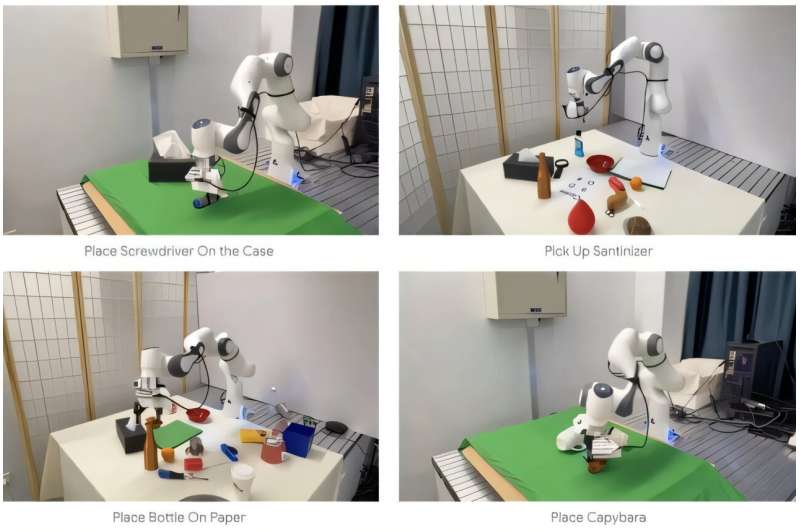

In demonstrations, robots equipped with this capability successfully performed tasks such as manipulating objects and responding to prompts in ways that required contextual understanding rather than predefined rules. The system’s ability to anticipate outcomes reduces trial-and-error behavior, making actions more efficient and purposeful.

The implications extend beyond individual tasks. By equipping robots with a form of “visual imagination,” researchers are moving closer to machines that can operate autonomously in complex, changing environments. This represents a shift from reactive systems to predictive ones, where decision-making is guided by simulated foresight.

As robotics continues to evolve, integrating perception, prediction, and action, such models could play a central role in enabling more general-purpose, adaptable machines.