Researchers at New York University’s General-Purpose Robotics and AI Lab, led by Lerrel Pinto, have developed EgoZero, a system that teaches robots everyday tasks without any robot-generated training data, IEEE Spectrum reports. Instead, EgoZero learns from human demonstrations recorded with Meta’s Project Aria smart glasses, which capture a first-person, or egocentric, view. This perspective provides rich context because the camera moves naturally with the wearer’s head, following the objects and actions the wearer attends to in real time.

Traditional robot training methods often require large datasets recorded by the robots themselves or by external cameras, which makes data collection time-consuming, costly, and inflexible. EgoZero sidesteps these barriers: a person simply wears the smart glasses while demonstrating a task, such as placing bread on a plate or opening an oven door, and as little as 20 minutes of demonstrations is enough to teach a robot the task. Using these short demonstrations alone, robots reached success rates of up to 70%, a sign of how data-efficient the method is.

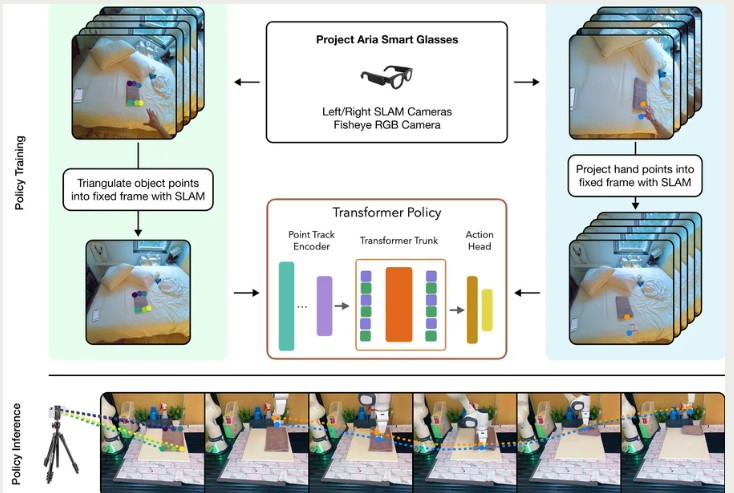

A key innovation lies in how EgoZero translates human actions into robot-compatible motions. Raw video frames are a poor training signal on their own, because they show human hands, which look and move differently from robot arms and grippers. Instead, the system extracts and tracks 3D “action points”: key coordinates that capture the relevant movements and interactions. These points are then mapped into trajectories that a robot can execute, regardless of differences in body structure or environment. Because this representation does not depend on the shape of the body performing the task, it is morphology-agnostic, which allows robots to generalize learned tasks across varying contexts.
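The article does not describe EgoZero’s implementation, so the following is only a rough illustration of the general idea of turning tracked 3D points into robot targets. It assumes a calibrated 4×4 rigid transform from the glasses’ world frame to the robot’s base frame is available, and it uses a hypothetical mapping in which a thumb-tip point and an index-fingertip point become a gripper position (their midpoint) and a gripper opening (their distance). The function names and this fingertip-to-gripper rule are assumptions for illustration, not EgoZero’s actual method.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]
Matrix4 = Sequence[Sequence[float]]  # 4x4 homogeneous transform, row-major


def transform_point(T: Matrix4, p: Point) -> Point:
    """Apply a 4x4 rigid transform (rotation + translation) to a 3D point."""
    x, y, z = p
    # Only the top three rows matter for a rigid transform; the last row is [0, 0, 0, 1].
    return tuple(T[r][0] * x + T[r][1] * y + T[r][2] * z + T[r][3] for r in range(3))


def fingertips_to_gripper(
    thumb: Sequence[Point],
    index: Sequence[Point],
    T_robot_from_world: Matrix4,
) -> List[Tuple[Point, float]]:
    """Hypothetical mapping from tracked fingertip points to a gripper trajectory.

    For each frame, both points are re-expressed in the robot's base frame
    (assumed calibration), the gripper target is their midpoint, and the
    gripper opening is the distance between them.
    """
    trajectory = []
    for t, i in zip(thumb, index):
        t_r = transform_point(T_robot_from_world, t)
        i_r = transform_point(T_robot_from_world, i)
        midpoint = tuple((a + b) / 2.0 for a, b in zip(t_r, i_r))
        opening = math.dist(t_r, i_r)
        trajectory.append((midpoint, opening))
    return trajectory


if __name__ == "__main__":
    # Assumed calibration: robot base sits 0.5 m along x from the world origin.
    T = [[1, 0, 0, 0.5], [0, 1, 0, 0], [0, 0, 1, 0], [0, 0, 0, 1]]
    thumb = [(0.0, 0.0, 0.0), (0.0, 0.02, 0.0)]
    index = [(0.0, 0.08, 0.0), (0.0, 0.04, 0.0)]  # fingers closing over two frames
    for pos, width in fingertips_to_gripper(thumb, index, T):
        print(pos, round(width, 3))
```

Because the output consists only of points and a width, nothing in it depends on what a human hand looks like, which is the intuition behind a morphology-agnostic representation.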

By eliminating the need for robot-specific training data, EgoZero offers a scalable and portable approach to robot learning. It lowers the barrier to teaching general-purpose robots and could help them adapt faster to real-world settings where humans and machines work together. In the long run, the approach could accelerate progress toward robots that learn a wide range of household and industrial tasks simply by watching people.