Training modern AI systems has long required a trade-off between size, speed, and performance. Larger models tend to be more capable but demand vast computational resources, while smaller models are faster but often less accurate. A new technique developed by researchers at MIT’s Computer Science and Artificial Intelligence Laboratory reframes this compromise by shrinking models as they learn rather than after training is complete, MIT News reports.

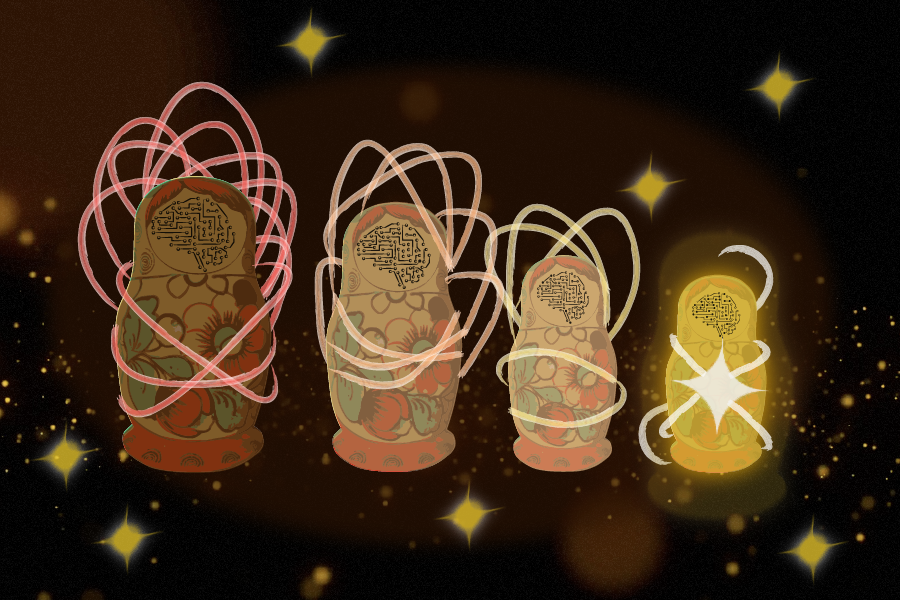

The method, called CompreSSM, applies principles from control theory to analyze a model’s internal structure during training. Instead of building a full-scale model and trimming it later, the system identifies which components contribute meaningfully to performance and removes unnecessary elements early in the learning process. This allows the model to gradually become leaner while still improving its capabilities.

Traditional approaches either train a large model and prune it afterward, which still incurs the full cost of training the large model, or design a smaller model from the start, which often sacrifices accuracy. CompreSSM avoids both limitations by integrating compression directly into training. As a result, models can maintain high performance while requiring less computation, energy, and training time.

The technique is particularly effective for state-space models, a class of AI architectures used in applications such as language processing, audio generation, and robotics. By continuously evaluating which parts of the system are “pulling their weight,” CompreSSM ensures that only the most relevant components are retained.
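To make the idea of components "pulling their weight" concrete, here is a minimal sketch of one standard control-theoretic way to score the states of a linear state-space model: Hankel singular values, the quantity behind classical balanced truncation. The article does not say which measure CompreSSM actually uses, so treat this as an illustration under that assumption rather than the researchers' implementation. The function names `hankel_singular_values` and `states_to_keep`, the diagonal state matrix, and the 99% threshold are all hypothetical choices made for this example.

```python
import numpy as np


def hankel_singular_values(a_diag, B, C):
    """Hankel singular values of a stable discrete-time SSM with diagonal A.

    Model (illustrative): x[k+1] = diag(a_diag) @ x[k] + B @ u[k],  y[k] = C @ x[k]
    """
    a = np.asarray(a_diag, dtype=float)
    B = np.asarray(B, dtype=float)
    C = np.asarray(C, dtype=float)
    if np.any(np.abs(a) >= 1.0):
        raise ValueError("every |a_i| must be below 1 for a stable system")
    # For diagonal A, the Lyapunov equations P = A P A^T + B B^T and
    # Q = A^T Q A + C^T C can be solved entry by entry.
    denom = 1.0 - np.outer(a, a)
    P = (B @ B.T) / denom  # controllability Gramian
    Q = (C.T @ C) / denom  # observability Gramian
    # The Hankel singular values are the square roots of the eigenvalues of P Q.
    eigvals = np.linalg.eigvals(P @ Q)
    hsv = np.sqrt(np.clip(eigvals.real, 0.0, None))
    return np.sort(hsv)[::-1]  # largest (most important) first


def states_to_keep(hsv, energy=0.99):
    """Smallest number of states whose singular values hold `energy` of the total."""
    cumulative = np.cumsum(hsv) / np.sum(hsv)
    rank = int(np.searchsorted(cumulative, energy)) + 1
    return min(rank, len(hsv))


if __name__ == "__main__":
    # Hypothetical 4-state model: two states barely couple to input and output.
    a_diag = [0.9, 0.5, 0.1, 0.05]
    B = [[1.0], [1.0], [0.01], [0.001]]
    C = [[1.0, 1.0, 0.01, 0.001]]
    hsv = hankel_singular_values(a_diag, B, C)
    print("Hankel singular values:", hsv)
    print("States to keep:", states_to_keep(hsv))
```

A state with a small Hankel singular value is both hard to excite from the input and barely visible at the output, so dropping it changes the model's input-output behavior very little. An in-training scheme in the spirit of the article would recompute such scores periodically and shrink the state dimension as training proceeds, though the exact schedule and criteria are not described in the source.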

Beyond improving efficiency, the approach could reshape how AI systems are developed and deployed. Leaner models lower infrastructure costs, making advanced AI more accessible for organizations with limited resources. Faster training cycles also enable quicker iteration and innovation.

In practical terms, this advancement supports a wide range of applications, from real-time language models and intelligent assistants to robotics and edge computing systems where processing power is constrained. It also holds promise for reducing the environmental footprint of AI by cutting energy consumption across training and deployment.

By embedding optimization directly into the learning process, CompreSSM points toward a future where AI systems are not only more capable but also more efficient by design.