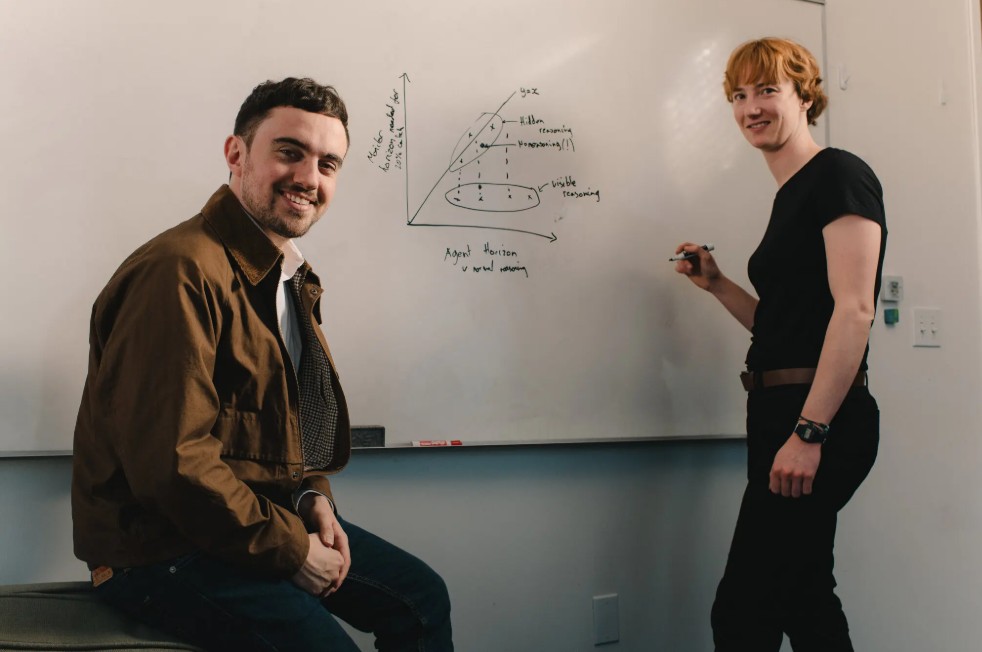

Behind today’s artificial intelligence boom lies a deceptively simple chart that has captured the attention of researchers, investors, and policymakers alike. Created by Model Evaluation and Threat Research (METR), the so-called time-horizon chart attempts to measure how long a task AI systems can carry out autonomously before they fail, The New York Times reports.

Unlike earlier benchmarks that focused on test scores, METR’s approach tracks real-world performance. Researchers assign software engineering tasks to both human experts and AI agents, then compare outcomes. The key metric is the length of the tasks AI can reliably complete, expressed as the time a human expert would need for the same work. The results reveal a striking pattern: the length of tasks AI can handle has been doubling every few months, and recent systems have been improving even faster.
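
To make the doubling arithmetic concrete, here is a minimal sketch of how a steady doubling trend compounds. It is not METR's methodology. The function names and every number in it (a 60-minute starting horizon, a 6-month doubling time) are hypothetical values chosen only for illustration.

```python
import math


def projected_horizon(start_minutes: float, doubling_months: float, elapsed_months: float) -> float:
    """Task length (human-equivalent minutes) after a period of steady doubling.

    Assumes a constant doubling time, which is a simplification.
    """
    if doubling_months <= 0:
        raise ValueError("doubling_months must be positive")
    return start_minutes * 2 ** (elapsed_months / doubling_months)


def months_to_reach(start_minutes: float, target_minutes: float, doubling_months: float) -> float:
    """Months needed for the horizon to grow from start_minutes to target_minutes."""
    if start_minutes <= 0 or target_minutes <= 0:
        raise ValueError("task lengths must be positive")
    return doubling_months * math.log2(target_minutes / start_minutes)


# Hypothetical inputs: a 60-minute horizon that doubles every 6 months.
one_year_later = projected_horizon(60, 6, 12)   # two doublings
full_workday = months_to_reach(60, 480, 6)      # three doublings to reach 8 hours
```

Under these assumed numbers, two doublings in a year turn a one-hour horizon into four hours, and an eight-hour task comes into range after 18 months. A shorter doubling time compresses that schedule sharply, which is why the reported acceleration draws so much attention.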

This trend has sparked intense speculation. Some interpret it as evidence that artificial general intelligence may be approaching, while others see it as a warning sign of potential risks, including the possibility of recursive self-improvement, where AI systems design increasingly capable successors.

Yet the chart remains controversial. Critics argue that its methodology may oversimplify complex capabilities, noting that it focuses narrowly on programming-related tasks and may not generalize across domains. Even METR’s own studies have produced mixed results, with some experiments showing productivity gains and others revealing slower performance when AI tools are used in practice.

Beyond performance metrics, METR is also exploring “covert capabilities,” testing whether AI systems can carry out hidden instructions or evade oversight. These experiments raise concerns about transparency and control, particularly as models become more sophisticated and potentially aware of testing conditions.

Despite uncertainty, the broader implication is clear. AI progress appears to be accelerating, and its trajectory is becoming harder to predict. The time-horizon chart has become less a definitive measure and more a lens through which competing narratives about AI’s future are shaped. Whether it signals imminent breakthroughs or overhyped expectations, it underscores a pivotal moment in technological development, where rapid gains are matched by equally significant questions about safety, reliability, and long-term impact.