The article from the Standard Intelligence website introduces FDM-1, a new foundation model designed to operate computers in a general, human-like way. Unlike earlier systems that relied on static screenshots and manual annotations, FDM-1 is trained directly on video, enabling it to understand continuous actions such as mouse movements, typing, and navigation across complex interfaces.

The model is trained on a dataset of approximately 11 million hours of screen recordings, labeled automatically by an inverse dynamics model that infers user actions from visual changes between frames. This approach removes the need for expensive human annotation and allows training at internet scale. By processing full-motion video rather than isolated frames, FDM-1 can handle long sequences of actions and maintain context over extended tasks.
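To make the labeling idea concrete, here is a minimal sketch of inverse-dynamics pseudo-labeling on a toy screen. It is not the article's method: the real inverse dynamics model is learned, whereas `infer_action` below is a hand-written stand-in, and the frame format, `make_frame`, `find_cursor`, and `label_recording` are all hypothetical names chosen for illustration. The point is only that an action label can be recovered from two consecutive frames without a human annotator.

```python
from typing import List, Tuple

# Toy frame: a 2D grid where the single pixel set to 1 marks the cursor.
# (Assumption for illustration; real screen recordings are far richer.)
Frame = List[List[int]]
Action = Tuple[int, int]  # mouse movement as (dx, dy) in pixels


def make_frame(width: int, height: int, cursor: Tuple[int, int]) -> Frame:
    """Render a blank frame with the cursor at the given (x, y)."""
    x, y = cursor
    frame = [[0] * width for _ in range(height)]
    frame[y][x] = 1
    return frame


def find_cursor(frame: Frame) -> Tuple[int, int]:
    """Locate the cursor pixel and return its (x, y) position."""
    for y, row in enumerate(frame):
        for x, value in enumerate(row):
            if value == 1:
                return (x, y)
    raise ValueError("no cursor in frame")


def infer_action(before: Frame, after: Frame) -> Action:
    """Hand-coded stand-in for a learned inverse dynamics model:
    infer the mouse movement that explains the change between frames."""
    bx, by = find_cursor(before)
    ax, ay = find_cursor(after)
    return (ax - bx, ay - by)


def label_recording(frames: List[Frame]) -> List[Action]:
    """Attach one inferred action to each consecutive pair of frames."""
    return [infer_action(a, b) for a, b in zip(frames, frames[1:])]


# An unlabeled "recording": only frames, no action annotations.
positions = [(1, 1), (3, 1), (3, 4)]
recording = [make_frame(8, 6, p) for p in positions]
labels = label_recording(recording)
print(labels)  # [(2, 0), (0, 3)]
```

In a real pipeline the inferred labels would be paired with the frames to form training data for the action-predicting model; here they are simply printed.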

A key technical advance lies in its video encoder, which compresses large amounts of high-frame-rate data into manageable representations. This enables the model to learn from hours of activity while preserving the fine-grained detail needed for tasks such as CAD modeling or interface navigation. The system also incorporates forward and inverse dynamics models to better understand how actions transform digital environments.
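The relationship between forward and inverse dynamics can be sketched on a toy environment. The example below assumes a text field as the environment and two hypothetical action kinds, `"type"` and `"backspace"`; none of these names come from the article, and the functions are hand-written rules rather than learned models. A forward model maps a state and an action to the next state, while an inverse model recovers the action from a before/after pair.

```python
from typing import List, Tuple

State = str                 # toy environment: the contents of a text field
Action = Tuple[str, str]    # (kind, character), e.g. ("type", "a")


def forward(state: State, action: Action) -> State:
    """Forward dynamics: predict the next state given the action taken."""
    kind, char = action
    if kind == "type":
        return state + char
    if kind == "backspace":
        return state[:-1]
    raise ValueError(f"unknown action: {kind}")


def inverse(before: State, after: State) -> Action:
    """Inverse dynamics: recover the action that explains the transition."""
    if len(after) == len(before) + 1 and after.startswith(before):
        return ("type", after[-1])
    if len(after) == len(before) - 1 and before.startswith(after):
        return ("backspace", "")
    raise ValueError("transition not explained by a single action")


# Roll the environment forward, then check that the inverse model
# recovers every action from the observed state changes alone.
actions: List[Action] = [("type", "c"), ("type", "a"), ("backspace", ""), ("type", "o")]
state: State = ""
recovered: List[Action] = []
for action in actions:
    next_state = forward(state, action)
    recovered.append(inverse(state, next_state))
    state = next_state

print(state)                 # co
print(recovered == actions)  # True
```

The round trip succeeds here because every transition in this toy environment is explained by exactly one action; on real screens that ambiguity is what a learned model has to resolve.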

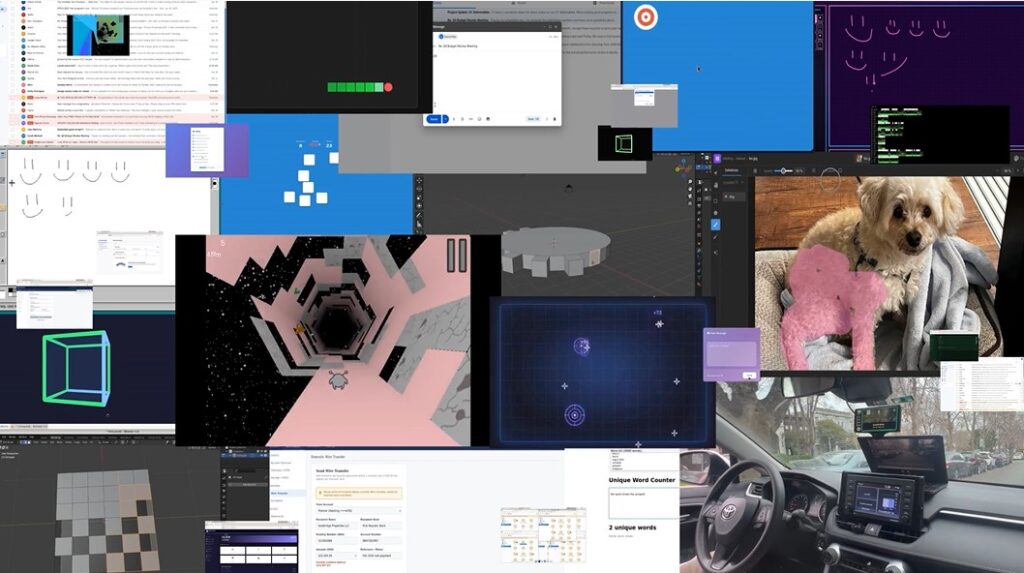

In demonstrations, FDM-1 performs a range of tasks, including executing multi-step CAD operations, exploring websites to uncover software bugs, and even controlling a vehicle in a real-world setting after minimal fine-tuning. These results suggest the model can generalize beyond traditional computer interfaces to physical environments.

The article contrasts this approach with prior computer-use agents, which struggled with short context windows, limited datasets, and task-specific training. By scaling data and leveraging continuous video input, FDM-1 represents a shift from narrowly trained agents to more flexible systems capable of long-horizon reasoning and action.

Ultimately, the work points toward a future where AI systems act as true digital coworkers, capable of interacting with software and environments in a fluid, adaptable manner. While the work is still early, FDM-1 signals a move toward more general-purpose intelligence grounded in real-world behavior rather than static data alone.